Infino Enterprise AI Stack.

Our commercial offering is a full AI stack built on our core engine, which reduces the cost of large-scale search, observability, and analytics. The stack includes the UI, RBAC, the Fino data agent, connectors, and federated search, and it runs inside your VPC or air-gapped environment. Use it internally or customize it for resale through your channels.

What ships in the stack.

Each component runs against the same identity, policy, and deployment model. No glue layer between them.

- 01

UI

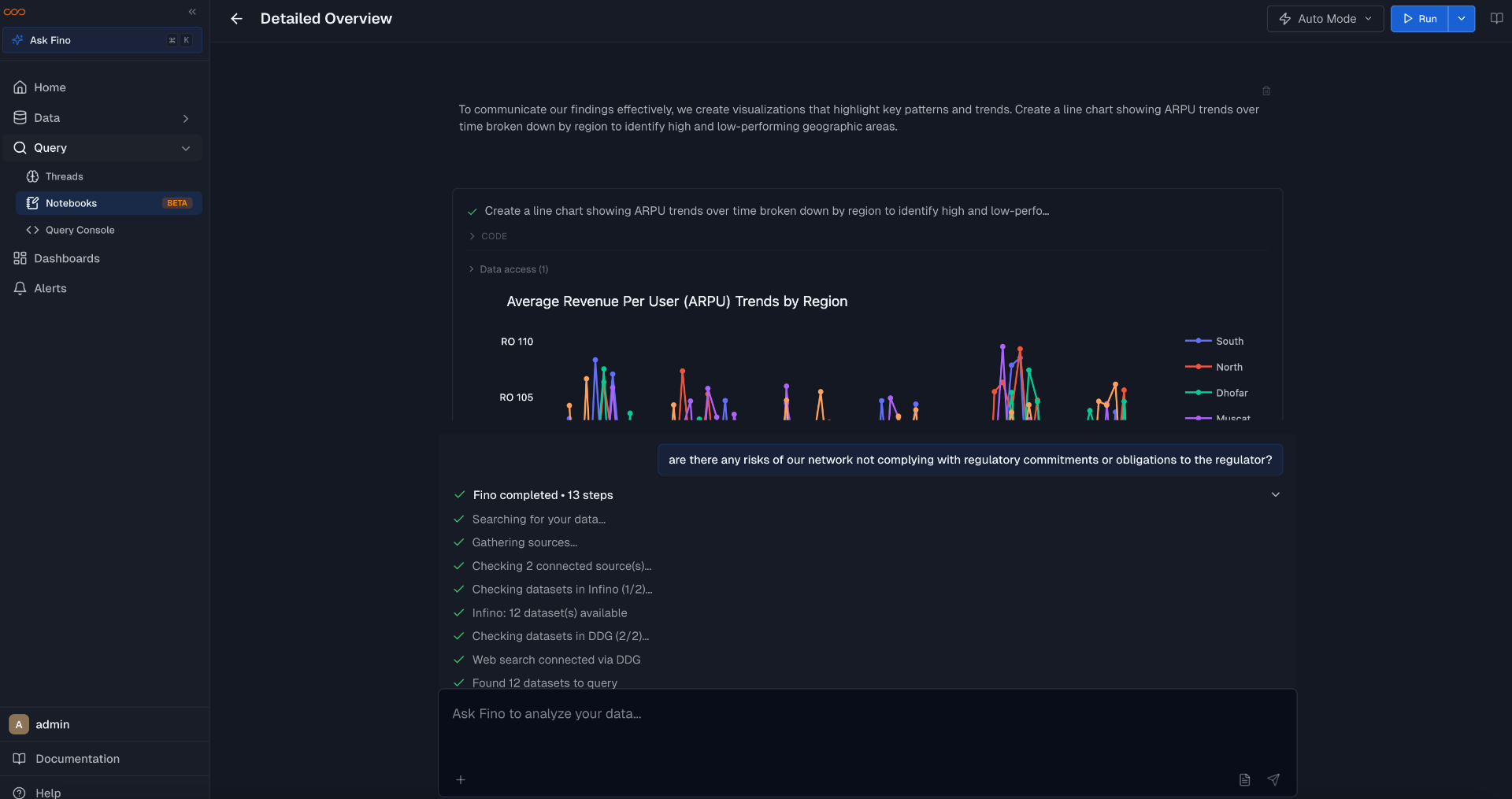

Notebooks, generated dashboards, and threaded chat. Embeddable via script tag or iframe.

- 02

Fine-grained RBAC

Row-, column-, and action-level policies enforced in the engine. Same model for users and agents.

- 03

Fino data agent

Compiles queries against your semantic layer. Returns inspectable plans and cited rows. Runs under the same RBAC.

- 04

Data connectors

Snowflake, Databricks, BigQuery, Postgres, Elasticsearch, Prometheus, S3, Kafka. Query in place.

- 05

Federated search

Hybrid BM25 + ANN across Parquet, warehouse tables, and OLTP. One query plan, merged results.

- 06

Elasticsearch compatibility

Query DSL-compatible API layer. Apps, dashboards, and tooling built against Elasticsearch or OpenSearch point at Infino without a rewrite.

UI surfaces

Notebooks for analysts, generated dashboards for execs, threaded chat for ad-hoc questions. All three render against the same query engine and respect the same RBAC. Embeddable in your application via script tag or iframe.

Fine-grained RBAC

Roles, scopes, and row- and column-level filters enforced inside the query engine. Agents authenticate with scoped credentials and resolve through the same policy path as users.
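To make the policy semantics concrete, here is a minimal, self-contained sketch of how a column deny-list and a row filter might combine, using a policy shaped like this section's `analyst_eu` role. It does not call the Infino SDK or show how the engine enforces policies. The helper `apply_policy`, the sample rows, and the reading of a leading `-` as "exclude this column" are all assumptions made for illustration.

```python
from typing import Any, Callable

Row = dict[str, Any]


def apply_policy(
    rows: list[Row],
    denied_columns: set[str],
    row_filter: Callable[[Row], bool],
) -> list[Row]:
    """Keep rows that pass the filter, then strip denied columns from each."""
    return [
        {col: val for col, val in row.items() if col not in denied_columns}
        for row in rows
        if row_filter(row)
    ]


# Hypothetical policy modeled on the 'analyst_eu' role.
# Assumption: a leading '-' marks a column to exclude.
column_rules = ["-ssn", "-salary"]
denied = {rule[1:] for rule in column_rules if rule.startswith("-")}

# Made-up sample data, for illustration only.
contracts = [
    {"id": 1, "region": "EU", "ssn": "000-00-0001", "salary": 90000},
    {"id": 2, "region": "US", "ssn": "000-00-0002", "salary": 85000},
]

# Python stand-in for the row filter "region = 'EU'".
visible = apply_policy(contracts, denied, lambda row: row["region"] == "EU")
print(visible)
```

In this sketch a user and an agent holding the same role would see identical output, because both pass through the same function. That mirrors the single policy path described above.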

    sdk.create_role('analyst_eu', {
        'datasets': ['contracts', 'cases'],
        'columns': {'contracts': ['-ssn', '-salary']},
        'row_filter': "region = 'EU'",
        'actions': ['read', 'query']
    })

    sdk.assign_role(agent_id, 'analyst_eu')

Fino data agent

Fino plans against the semantic layer, compiles SQL/search queries, and returns answers with cited rows and a replayable trace. It runs through the same identity and RBAC as a human user — no service-account back-channel.

    sdk.send_message({
        'thread_id': thread['id'],
        'content': {
            'user_query': "Top 10 contracts at risk of breach this quarter, by jurisdiction"
        }
    })
    # returns: compiled plan, cited rows, replayable trace

Data connectors

Snowflake, Databricks, BigQuery, Postgres, Elasticsearch, Prometheus, S3, Kafka. Sources are registered and queried in place. No nightly copy job, no second source of truth.

    sdk.create_connection('snowflake', {
        'account': 'acme-prod',
        'warehouse': 'ANALYTICS_WH',
        'database': 'CONTRACTS',
        'auth': 'oauth'
    })

Federated search

Hybrid BM25 + ANN across Parquet on object storage, warehouse tables, and operational stores. Results are merged, ranked, and returned with source lineage.
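The page does not say how lexical and vector scores are merged. One common approach is a weighted sum of min-max-normalized scores, sketched below with the same 0.6 / 0.4 split used in this section's `hybrid` setting. The functions `min_max` and `hybrid_rank` and the sample scores are hypothetical and not part of the Infino SDK. Treat this as an illustration of the general technique, not a description of the engine's ranking.

```python
def min_max(scores: dict[str, float]) -> dict[str, float]:
    """Rescale scores to [0, 1] so BM25 and vector scores are comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        # All scores identical: treat every document as equally strong.
        return {doc: 1.0 for doc in scores}
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}


def hybrid_rank(
    bm25: dict[str, float],
    vector: dict[str, float],
    w_bm25: float = 0.6,
    w_vector: float = 0.4,
    top_k: int = 20,
) -> list[tuple[str, float]]:
    """Fuse two score maps with a weighted sum; a missing score counts as 0."""
    norm_bm25, norm_vec = min_max(bm25), min_max(vector)
    docs = set(norm_bm25) | set(norm_vec)
    fused = {
        doc: w_bm25 * norm_bm25.get(doc, 0.0) + w_vector * norm_vec.get(doc, 0.0)
        for doc in docs
    }
    return sorted(fused.items(), key=lambda item: item[1], reverse=True)[:top_k]


# Made-up scores from a lexical pass and a vector pass.
bm25_scores = {"a": 12.0, "b": 7.0, "c": 2.0}
vector_scores = {"b": 0.9, "c": 0.8, "d": 0.1}

ranked = hybrid_rank(bm25_scores, vector_scores)
print([doc for doc, _ in ranked])
```

With these sample scores, document `b` ranks first because it scores well in both passes, while `a` has the top BM25 score but no vector match. Normalizing before weighting matters because raw BM25 scores are unbounded, while similarity scores typically fall in a fixed range.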

    results = sdk.federated_search(
        query="termination clause AND active litigation",
        sources=['contracts_s3', 'snowflake.cases',
                 'es.support_tickets'],
        hybrid={'bm25': 0.6, 'vector': 0.4},
        top_k=20
    )

Elasticsearch compatibility

A Query DSL-compatible API in front of the Infino engine. Apps, dashboards, and tooling built against Elasticsearch or OpenSearch point at Infino without a rewrite — same queries, same client libraries, Parquet on object storage underneath.

    from elasticsearch import Elasticsearch

    # existing Elasticsearch client, new endpoint
    es = Elasticsearch('https://infino.acme.internal')

    es.search(index='contracts', body={
        'query': {
            'bool': {
                'must': [{'match': {'body': 'termination clause'}}],
                'filter': [{'term': {'region': 'EU'}}]
            }
        },
        'size': 20
    })
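Because the compatibility layer is described as Query DSL-compatible, request bodies can be built as plain dictionaries without depending on a particular client. The sketch below uses a hypothetical helper, `bool_query`, that is not part of any Elasticsearch or Infino library. It assembles the same `bool` query shape shown in this section from a map of match clauses and a map of term filters.

```python
from typing import Any


def bool_query(
    must_match: dict[str, str],
    term_filters: dict[str, Any],
    size: int = 20,
) -> dict[str, Any]:
    """Build a Query DSL body: scored `match` clauses plus unscored `term` filters."""
    return {
        "query": {
            "bool": {
                "must": [{"match": {field: text}} for field, text in must_match.items()],
                "filter": [{"term": {field: value}} for field, value in term_filters.items()],
            }
        },
        "size": size,
    }


body = bool_query({"body": "termination clause"}, {"region": "EU"})
print(body)
```

The resulting dictionary can be passed as the request body to whichever client already talks to your Elasticsearch or OpenSearch endpoint.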